Blocked After Sending Nudes? The Heartbreaking Truth About Instagram Privacy!

Have you ever wondered what happens when you send intimate photos on Instagram? The digital world can be a dangerous place, especially for young people navigating online relationships. When private moments become public without consent, the emotional damage can be devastating. Instagram's new privacy measures aim to prevent exactly these heartbreaking scenarios, but understanding the full picture requires diving deep into how the platform handles sensitive content and what protections are truly available.

The Growing Crisis of Online Sexual Exploitation

This story originally appeared on Quartz, highlighting a disturbing trend that has been growing across social media platforms. Meta, Instagram's parent company, announced on Thursday a new tool for Instagram Direct Messages designed to protect children and teenagers from predators who try to elicit nude photos and then "sextort" them. The feature is a significant step in the ongoing fight against online sexual exploitation.

According to recent studies, nearly 1 in 7 young people have been threatened with having their private images shared without consent. The psychological impact of such threats can be severe, leading to anxiety, depression, and, in extreme cases, self-harm. That is the backdrop for Meta's testing of new tools to protect teens on Instagram from sexploitation, unwanted nude images, and potential encounters with pedophiles.

Instagram's New Safety Features: A Closer Look

Instagram is preparing to roll out a new safety feature that automatically blurs nude images in messages, as part of broader efforts to protect minors on the platform from abuse and sexploitation. The aim is to use automated detection to flag potentially harmful images before vulnerable users see them.

The feature works by analyzing images sent through Direct Messages using machine learning models trained to recognize nude content. When such an image is detected, the system automatically blurs the image before it appears in the recipient's inbox, giving the recipient time to consider whether they want to view the content or report it. This extra layer of protection is particularly crucial for users under 18, who may be more susceptible to manipulation or coercion.
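Meta has not published how this decision is implemented. The following is a minimal Python sketch of the blur-then-decide flow described above, assuming the on-device model returns a score between 0 and 1. The threshold `NUDITY_THRESHOLD`, the `IncomingImage` class, and both functions are hypothetical names chosen for illustration, not Instagram's API.

```python
from dataclasses import dataclass

# Hypothetical cut-off; Meta has not published the threshold its model uses.
NUDITY_THRESHOLD = 0.8


@dataclass
class IncomingImage:
    image_id: str
    nudity_score: float  # assumed output of an on-device classifier, from 0.0 to 1.0
    blurred: bool = False


def prepare_for_inbox(img: IncomingImage, protection_enabled: bool) -> IncomingImage:
    """Mark the image as blurred if protection is on and the score meets the threshold."""
    img.blurred = protection_enabled and img.nudity_score >= NUDITY_THRESHOLD
    return img


def handle_recipient_choice(img: IncomingImage, choice: str) -> str:
    """The pause: a blurred image stays hidden until the recipient chooses what to do."""
    if not img.blurred:
        return "shown"
    if choice == "view":
        img.blurred = False  # recipient explicitly opted to see it
        return "shown"
    if choice == "report":
        return "reported"
    return "still blurred"


flagged = prepare_for_inbox(IncomingImage("msg-1", nudity_score=0.93), protection_enabled=True)
print(flagged.blurred)                             # True
print(handle_recipient_choice(flagged, "report"))  # reported
```

The key design point is that nothing is shown by default once an image is flagged: the recipient has to make an explicit choice before the content appears.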

Meta's Multi-Layered Approach to Protection

Meta will send notifications encouraging adults to turn on this feature as well, since protection shouldn't be limited to minors. Adults can also be victims of sextortion and revenge porn, and extending these protections to all users creates a safer environment for everyone.

According to the Wall Street Journal, Meta has started automatically detecting nude photos in DMs across all user accounts. The detection runs on-device rather than in the cloud, meaning the image analysis happens on the user's phone instead of on Meta's servers. This design is meant to ease privacy concerns while still providing protection against harmful content.

How the Nudity Protection Feature Works

To help address the growing concern of online sexual exploitation, Meta will soon start testing its new nudity protection feature in Instagram DMs. The feature blurs images detected as containing nudity and encourages people to think twice before sending nude images. When a user attempts to send a nude image, they'll receive a prompt asking if they're sure they want to send the content, along with information about the potential risks.

Instagram will automatically blur nude images in direct messages sent to users under 18 by default. Adult users will receive a notification encouraging them to turn on the feature, though it won't be enabled automatically for them. This graduated approach recognizes that while minors need maximum protection, adults should have the choice to enable or disable safety features based on their preferences.
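The age-based defaults and the sender-side "think twice" prompt can be summarized as a small policy. This is a minimal Python sketch, not Meta's code: `NudityProtectionSettings`, `default_settings`, `may_send`, and the `show_opt_in_nudge` flag are hypothetical names, and the assumption that the sender prompt appears only when protection is on is labeled in the comments.

```python
from dataclasses import dataclass


@dataclass
class NudityProtectionSettings:
    enabled: bool
    show_opt_in_nudge: bool  # hypothetical flag: show the "turn this on" notification


def default_settings(age: int) -> NudityProtectionSettings:
    """Under-18 accounts start protected; adults are nudged but must opt in."""
    if age < 18:
        return NudityProtectionSettings(enabled=True, show_opt_in_nudge=False)
    return NudityProtectionSettings(enabled=False, show_opt_in_nudge=True)


def may_send(settings: NudityProtectionSettings, image_flagged: bool, user_confirmed: bool) -> bool:
    """Sender-side prompt (assumed here to apply only when protection is on)."""
    if not settings.enabled or not image_flagged:
        return True  # nothing to warn about
    return user_confirmed  # a flagged image goes out only after an explicit "yes"


teen = default_settings(15)
print(teen.enabled)                                                 # True
print(may_send(teen, image_flagged=True, user_confirmed=False))     # False
```

Keeping the default in one function makes the graduated approach explicit: minors get protection without having to do anything, while adults keep the choice.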

The Technology Behind Image Detection

Instagram says it is testing new features aimed at protecting young people and combating sexual extortion, including automatically blurring nudity in direct messages. The technology relies on computer vision models trained to identify nude content. Like any classifier, such a system has to balance two risks: missing harmful images, and producing false positives, such as flagging artistic or medical images that contain nudity.

The detection happens in real time, with the analysis performed on the user's device. Because images are analyzed on the device itself, Meta says the feature can work in end-to-end encrypted chats and that it won't have access to these images unless someone reports them. Blurred images remain viewable if the recipient chooses to unblur them, but the initial blur creates a crucial moment of pause.
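To make "blurred but still viewable" concrete, here is a minimal Python sketch using a simple box blur on a tiny grayscale grid. It is an illustration only: Meta has not said which blur it applies (a production app would likely use a much stronger blur on full-size images), and `box_blur` and `render_on_device` are hypothetical helpers. The point it shows is that blurring is a display decision made locally, so the original pixels stay on the device and reappear once the recipient chooses to reveal them.

```python
from typing import List

Grid = List[List[int]]  # grayscale pixels, 0-255


def box_blur(pixels: Grid, radius: int = 1) -> Grid:
    """Replace each pixel with the mean of its neighbours within `radius`.
    Neighbours that fall outside the image are left out of the average."""
    h, w = len(pixels), len(pixels[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = count = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w:
                        total += pixels[ny][nx]
                        count += 1
            out[y][x] = total // count  # integer mean
    return out


def render_on_device(pixels: Grid, flagged: bool, revealed: bool) -> Grid:
    """Decide what to draw; the original grid is never modified or discarded."""
    if flagged and not revealed:
        return box_blur(pixels)
    return pixels


image = [[0, 0, 0], [0, 90, 0], [0, 0, 0]]
print(render_on_device(image, flagged=True, revealed=False))
# [[22, 15, 22], [15, 10, 15], [22, 15, 22]]
```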

The Broader Context: Online Safety in the Digital Age

The fight against predators and abusers requires unwavering commitment and sophisticated technological solutions.

The digital landscape has created new vulnerabilities that traditional safety measures couldn't address. Predators can now operate anonymously across borders, targeting victims through social media, gaming platforms, and messaging apps. The anonymity of the internet has emboldened bad actors while making it harder for law enforcement to track and prosecute offenders. Meta's new tools represent an important step in creating digital barriers against these threats.

Understanding Instagram's Community Guidelines

In Instagram's own words, the platform is "a reflection of our diverse community of cultures, ages, and beliefs." It recognizes that creating a safe space requires balancing freedom of expression with protection from harm, and it tries to strike that balance through community guidelines that spell out what content is acceptable and what crosses the line into harmful territory.

Instagram also says, "We've spent a lot of time thinking about the different points of view that create a safe and open environment for everyone." Meta's approach to content moderation rests on the principle that safety and free expression can coexist when implemented thoughtfully. The guidelines are meant to be clear and transparent, helping users understand what they can and cannot post, and they are backed by mechanisms for reporting violations.

Taking Action: Your Rights and Responsibilities

You can anonymously report photos that go against Instagram's community standards. This reporting feature is crucial because it allows users to take action without fear of retaliation. When you report content, Instagram reviews it against their community guidelines and takes appropriate action if violations are found. This might include removing the content, disabling accounts, or in severe cases, involving law enforcement.

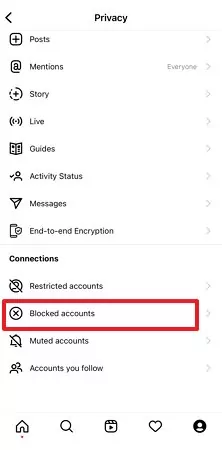

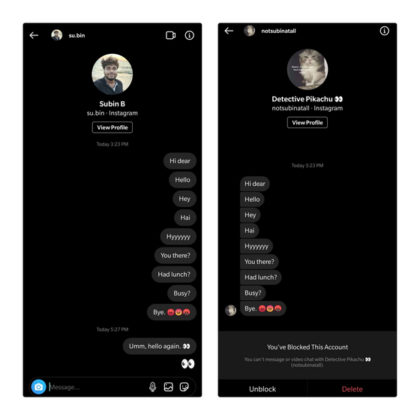

If someone is threatening to share things you want to keep private, it's essential to know that you have rights and options. Instagram provides tools for dealing with threats and harassment, including the ability to block users, restrict their access to your content, and report threatening behavior. The platform also works with law enforcement when criminal activity is involved.

Protecting Yourself and Others

If someone you care about asks you to share a nude photo or video, or to leave Instagram for a private web chat, and you don't want to, tell them it makes you feel uncomfortable. A genuine friend or partner will respect your boundaries, understand your hesitation, and won't pressure you into something you're not comfortable with.

If anyone tries to threaten or intimidate you into sharing photos or videos, just refuse. Remember that threats and coercion are forms of abuse, and you don't have to comply with someone who is trying to manipulate you. Document the threats, report them to the platform, and consider involving law enforcement if the threats are severe or persistent.

Building a Safer Online Community

Instagram says it created its community guidelines so that users can "help us foster and protect this amazing community." Everyone has a role to play in creating a safer online environment, from platform developers to individual users. By understanding the tools available, knowing your rights, and supporting others who may be in vulnerable situations, we can collectively reduce the harm caused by online exploitation.

The new features being tested by Instagram represent an important evolution in how social media platforms approach user safety. By combining technological solutions with educational resources and reporting mechanisms, Meta is working to create an environment where users can connect and share without fear of exploitation or abuse.

Conclusion: The Path Forward

The heartbreaking reality of online exploitation has driven Instagram to implement these new protective measures, but technology alone cannot solve this problem. While features like automatic nudity detection and image blurring provide important safeguards, the most effective protection comes from educated, empowered users who understand their rights and know how to respond to threats.

As these new features roll out across Instagram, users should familiarize themselves with the available tools and resources. Whether you're a parent concerned about your child's online safety, a young person navigating digital relationships, or an adult user who wants additional protection, understanding how these features work and how to use them effectively is crucial.

The fight against online sexual exploitation requires vigilance, education, and the willingness to speak up when something doesn't feel right. By working together and taking advantage of the safety tools available, we can create a digital environment where everyone feels safe to express themselves without fear of exploitation or harm. The path forward requires continued innovation, strong community guidelines, and most importantly, a commitment from all users to look out for one another in the digital space.